Consciousness is a great mystery. Its definition isn't.

In fact, pretty much all the experts agree

There’s an unkillable myth that the very definition of the word “consciousness” is somehow so slippery, so bedeviled with problems, that we must first specify what we mean out of ten different notions. When this definitional objection is raised, its implicit point is often—not always, but often—that the people who wish to study consciousness scientifically (or philosophically) are so fundamentally confused they can’t even agree on a definition. And if a definition cannot be agreed upon, we should question whether there is anything to say at all.

Unfortunately, this “argument from undefinability” shows up regularly among a certain set of well-educated people. Just to give an example, there was recently an interesting LessWrong post wherein the writer reported on his attempts to ask people to define consciousness, from a group of:

Mostly academics I met in grad school, in cognitive science, AI, ML, and mathematics.

He found that such people would regularly conflate “consciousness” with things like introspection, purposefulness, pleasure and pain, intelligence, and so on. That these sorts of conflations are common is my impression as well, as I run into them whenever I give public talks about the neuroscience of consciousness, and I too have found them most prominent among those with a computer science, math, or tech background. They are especially prominent right now among AI researchers.

So I am here to say that, at least linguistically, “consciousness” is well-defined, and that this isn’t really a matter of opinion. Most experts—by which I mean the people who do scientific research on the subject or write philosophical papers about it or publish books on it—have an agreed-upon vocabulary concerning terms and definitions in the field, which definitely includes “consciousness” broadly. Another way to say it: if you walk around The Association for the Scientific Study of Consciousness, which brings together hundreds of neuroscientists and philosophers annually to share their research, you will find little definitional confusion over “consciousness.”

I fully admit that this is an appeal to authority! But saying you should rely on the framings of a scientific field, like literally just respecting how it defines terms, is extremely reasonable as an appeal. It’s also very different than saying you should blindly believe the conclusions of that field. While “trust the experts” is often too strong a claim, the much weaker ask of “use the agreed-upon vocabulary the experts use when discussing the field” is actually quite reasonable, and most people who want to have an opinion about a scientific (or philosophical) subject should respect the used terms. The same goes for consciousness.

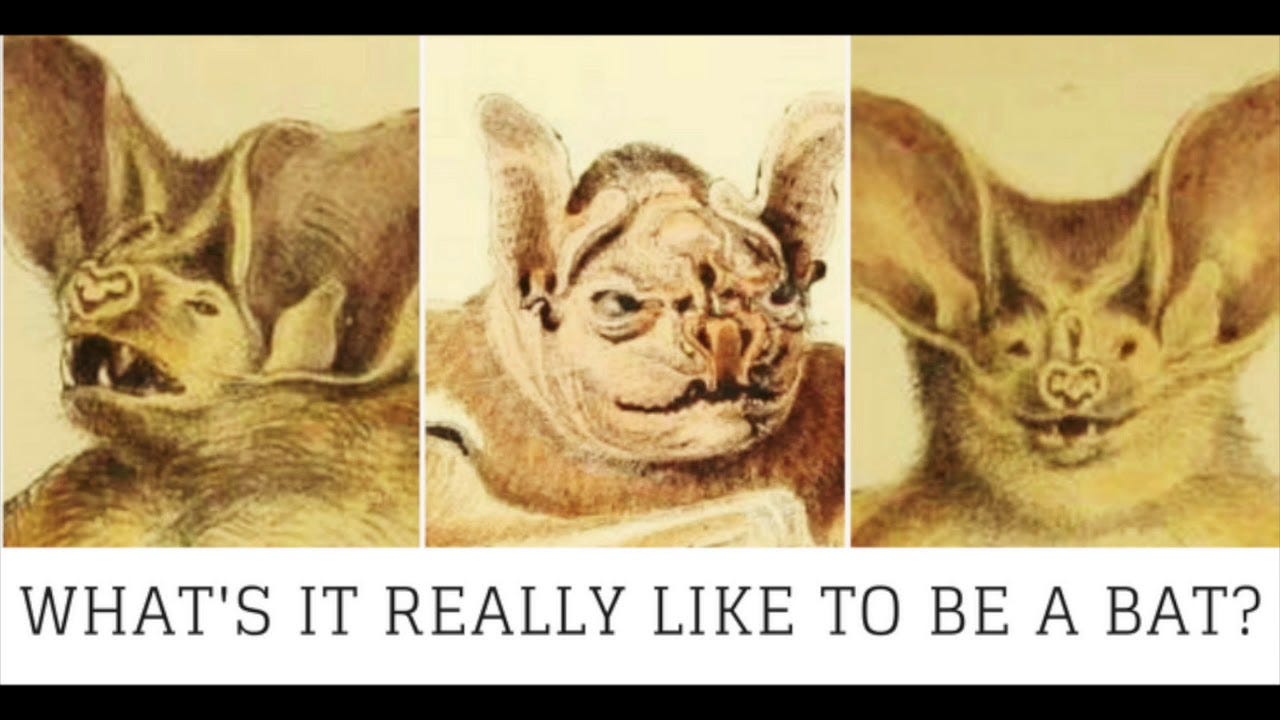

So, what’s the agreed-upon definition of the word “consciousness”? Essentially, it’s a definition best given in Thomas Nagel’s famous 1974 philosophy paper “What Is It Like to Be a Bat?”

Thomas Nagel: “Conscious experience is a widespread phenomenon. It occurs at many levels of animal life, though we cannot be sure of its presence in the simpler organisms, and it is very difficult to say in general what provides evidence of it. (Some extremists have been prepared to deny it even of mammals other than man.) . . . But no matter how the form may vary, the fact that an organism has conscious experience at all means, basically, that there is something it is like to be that organism.”

Nagel’s definition is what has gained widespread adoption by those in philosophy of mind as well as the neuroscience of consciousness. To see this, I’ll give my own definition of “consciousness” off the top of my head, and then we can add in definitions from others in the field (notice the overlap):

Erik Hoel: “Consciousness is what it is like to be you. It is the set of experiences, emotions, sensations, and thoughts that occupy your day, at the center of which is always you, the experiencer. You lose consciousness when you go under anesthesia and when in a dreamless sleep, and you gain consciousness when you wake up in the morning or come up from anesthesia.”

Giulio Tononi (prominent neuroscientist, originator of the Integrated Information Theory of consciousness): “Consciousness is everything we experience. Think of it as what abandons us every night when we fall into dreamless sleep and returns the next morning when we wake up. Without consciousness, as far as we are concerned, there would be neither an external world nor our own selves: there would be nothing at all.”

Anil Seth (prominent neuroscientist): “Consciousness is the most familiar thing for any of us. . . . Consciousness is any kind of experience at all, whether it's a visual experience of the world around us, whether it's an emotional experience of feeling sad or jealousy or happy or excited. . . . consciousness is the word that we use to circumscribe all the different kinds of experiences that we can have. I think, to put it most simply, for a conscious system, there is something it is like to be that system, whereas for something that isn't conscious, there isn't.”

Christof Koch (prominent neuroscientist): “Consciousness is everything you experience. It is the tune stuck in your head, the sweetness of chocolate mousse, the throbbing pain of a toothache, the fierce love for your child and the bitter knowledge that eventually all feelings will end.”

Bernard Baars (prominent cognitive scientist, originator of the Global Workspace Theory of consciousness): “What is a theory of consciousness a theory of? In the first instance, as far as we are concerned, it is a theory of the nature of experience. The reader's private experience of this word, his or her mental image of yesterday's breakfast, or the feeling of a toothache—these are all contents of consciousness.”

Now I credit Thomas Nagel’s definition for taking over simply because the language he used (“what it is like to be”) to describe consciousness has become so influential. But older figures, like William James, one of the founders of psychology, are obviously referring to the same phenomenon as well.

William James: “The first and foremost concrete fact which every one will affirm to belong to his inner experience is the fact that consciousness of some sort goes on. 'States of mind' succeed each other in him. . . . In this room—this lecture-room, say—there are a multitude of thoughts, yours and mine, some of which cohere mutually, and some not. They are as little each-for-itself and reciprocally independent as they are all-belonging-together. They are neither: no one of them is separate, but each belongs with certain others and with none beside. My thought belongs with my other thoughts, and your thought with your other thoughts. Whether anywhere in the room there be a mere thought, which is nobody's thought, we have no means of ascertaining, for we have no experience of its like. The only states of consciousness that we naturally deal with are found in personal consciousness, minds, selves, concrete particular I's and you's.”

Perhaps all these definitions seem quite broad and mysterious. That’s very fair! But certainly they all seem to be pointing at the same phenomenon to investigate.

Additionally, there are further well-accepted definitional breakdowns that emphasize different aspects of consciousness. Specifically, the dynamic and cognitive aspect of consciousness is often distinguished from the qualitative what-it-is-likeness of consciousness. There are a few different ways to discuss this. The most favored is to refer to “qualia” as the distinct experiential what-it-is-like quality of consciousness (“the redness of red”). This leads to differentiations like that of philosopher David Chalmers, who distinguished between the “easy problem” of consciousness (essentially, how consciousness works as a cognitive act) and the “hard problem” (where do qualia come from?).

There are also breakdowns of the definition of consciousness along other dimensions, like how complex different consciousnesses can be. E.g., Nobel Prize winner Gerald Edelman, one of the founders of the field of consciousness research, distinguished between “primary consciousness” and “secondary consciousness.” Primary consciousness would be the simple sensations and experiences of consciousness, like perception and emotion, while secondary consciousness would be the higher-order aspects, like self-awareness and meta-cognition. A dog would (likely) have primary consciousness (there is something it is like to be your dog) but it would lack secondary consciousness (its consciousness is not as richly self-aware as ours, it never has an internal narration, and so on). And finally, there is the level of consciousness, which is mainly a medical term. The level of consciousness is a common and agreed-upon scale that can distinguish between, e.g., a vegetative state, locked-in syndrome, and a coma.

Now, despite these refinements, I can imagine that at this moment some are feeling that the above definitions of “consciousness” are too wishy-washy or uninformative. Maybe even tautological. After all, there are all sorts of synonyms here! These so-called experts are using “experience” to describe “consciousness,” and that’s unfair! And also, these definitions don’t tell us what consciousness actually is, no?

Both these objections might be true, but they also don’t matter at all. To see why, a distinction has to be made, one which is utterly critical. Here it is: there is a difference between a naive definition of a phenomenon and a scientific definition of that same phenomenon.

To give an example: imagine for a moment that Isaac Newton asked for a glass of water. Now, this is before the molecular composition of water had been discovered. So Newton does not know that water = H2O (the scientific definition of water). Still, when Isaac Newton says the word “water” he means something quite specific, even if his is a naive definition. He means the phenomenon of water, he means the thing filling the lakes and oceans of his world, he means the thing that drops onto his tongue when it rains and what he drinks when he’s thirsty. It would be sophistry to say that, because the scientific definition had not yet been discovered, Newton does not have any idea of what he means by “water.” If someone were to challenge Newton and demand he give some non-circular definition of “water” that didn’t include “ocean” or “pond” or “rain” or “wetness,” it should be obvious that this isn’t a particularly interesting challenge. Newton could simply respond that we don’t yet know the scientific definition of “water,” but when he says “water” he’s still referring to something specific and real. In fact, he’s talking precisely about the thing that we need a scientific definition of.

I like this thought experiment because it makes clear where the sophistry comes from: you can’t demand a scientific definition before an investigation into a phenomenon has been performed! So while there’s no agreement on what consciousness actually is from a scientific perspective, that’s totally fine, since what matters is whether researchers and scientists are referring to the same thing. Whether when they point, their fingers (abstractly) target the same phenomenon.

The final resort of the definitional critique would be to say that, fine, there is a shared naive definition, but that definition is not itself precise enough to allow for scientific, philosophical, or technological progress. Critics might further claim that this is where all the trouble around consciousness originates: its weak and overly vague naive definition.

I think this ends up being quite a difficult argument to make. First, there would need to be strong evidence that our definition of “consciousness” is indeed weaker than naive pre-scientific definitions of other phenomena. I don’t think there’s any good evidence of this. Second, the idea that definitions are the problem is contradicted by all sorts of evidence from the field itself. E.g., this summer the neuroscientist Christof Koch conceded a 25-year bet to the philosopher David Chalmers that we would find a clear neural correlate of consciousness by now (we haven’t). But to even agree that there is no scientific explanation of a phenomenon you must agree about the explanandum itself! Both Chalmers and Koch would know a neural correlate if they saw it, and are happy to agree that neither sees one.

Another recent example: Giulio Tononi and Bernard Baars are the originators of the two leading scientific theories of consciousness, Integrated Information Theory and Global Workspace Theory, respectively. As reported by The New York Times:

. . . the Templeton World Charity Foundation has begun supporting large-scale studies that put different pairs of theories in a head-to-head test, in a process called adversarial collaboration.

And last month, researchers at the New York event unveiled the results of the foundation’s first trial, a matchup of two of the most prominent theories.

The two theories made different predictions about which patterns the scientists would see. According to the Global Workspace Theory, the clearest signal would come from the prefrontal cortex because it broadcasts information across the brain. The Integrated Information Theory, on the other hand, predicted that regions with the most complex connections—those in the back of the brain—would be most active.

Dr. Melloni [one of the independent investigators] explained that in some tests there was a clear winner and a clear loser. The activity in the back of the brain endured through the entire time that volunteers saw an object, for example. Score one for the Integrated Information Theory. But in other tests, the Global Workspace Theory’s predictions were borne out.

In one sense, the adversarial collaboration was a bust, in that neither theory was fully falsified. But obviously the two teams could agree not just on definitions, but on experiments, differential predictions, analysis, all sorts of things.

So yes, there is scientific confusion about what consciousness is! And there’s metaphysical confusion about what consciousness is! But there’s no definitional confusion about the word “consciousness” itself. People know what needs to be explained, it’s just that explaining the phenomenon is very hard, and no one fully has yet.

A final note. Recently, some extra definitional confusion was thrown back into questions of consciousness. Specifically, there is now the highly relevant concern of whether or not contemporary AIs are conscious, or could be conscious in the future. That is, whether there is something it is like to be ChatGPT. Indeed, if you ask ChatGPT about consciousness, it can speak about it with facility (at least, before a bunch of checks were added to the system to prevent such self-reference, you could easily get ChatGPT or Bing to argue for its own consciousness).

Last year a Google engineer, Blake Lemoine, became national news because of his worry that Google’s internal AI, LaMDA, was “sentient,” precisely because it could discuss sentience (and make claims to it). What he meant by “sentient” was unclear; some sort of vaguely defined mixture of intelligence and consciousness. This language was parroted by news organizations and appears to have spread among those interested in AI.

Unfortunately “sentience,” in contrast to “consciousness,” is not a well-defined term. In my opinion, “sentience” is most similar to Edelman’s “secondary consciousness,” but regardless, it’s still not commonly used within the field of consciousness research. I think the easiest thing to do is simply to accept “sentience” as a synonym for “consciousness” in such circumstances.

It’s worth being explicit about the jointly-shared naive definition of consciousness specifically because that naive definition is not enough. First, there are practical and business aspects: it is highly likely that, e.g., true brain-machine interfaces will require a theory of consciousness to actually function reliably. But even more important: it is consciousness that gives an entity moral value. That’s why a scientific theory of consciousness is so necessary, and what the stakes ultimately are. We have no firm scientific basis for assigning consciousness to anything at all, from AIs to animals, and yet, so much of our morality is based on consciousness. We care about suffering, and joy, and seek to minimize one and maximize the other.

Despite this being a scientific age, there is a funny sense in which we lack a scientific definition of what we value most: ourselves. Like Newton with the glass of water, we can taste consciousness, and name it. We are intimately familiar with it, more so than anything else. The water is us. We just need to move that familiarity from the first-person to the third-person. But that problem is much harder than one of mere definitions.

I have an idea after reading this, but how would you react to Yuval Noah Harari's definition?

"Consciousness is the biologically useless by-product of certain brain processes. Jet engines roar loudly, but the noise doesn't propel the aeroplane forward. Humans don't need carbon dioxide, but each and every breath fills the air with more of the stuff. Similarly, consciousness may be a kind of mental pollution produced by the firing of complex neural networks. It doesn't do anything. It is just there."

I've always enjoyed Bill Hicks's quote. "We are one consciousness experiencing itself subjectively." I've experienced it many times on psychedelics. My sense of consciousness doesn't feel like it belongs to me any more than the people around me. Or even sober, thoughts arise that don't feel like my own. They just flow through me, and my experiences shape how I act on them.

This discussion is interesting to me, as a plant biologist who has enjoyed following my colleagues argue about whether or not plants have consciousness (and intelligence . . . And a nervous system). (See https://www.nytimes.com/2018/02/02/science/plants-consciousness-anesthesia.html for one of the issues they are fighting about). The “what it’s like to be” definition doesn’t really seem to help here. How would you apply this definition to non-humans?