Excuse me, but the industries AI is disrupting are not lucrative

Gemini and the supply paradox of AI

Another day, another huge new AI model revealed. This time it’s Google’s Gemini. The demo video earlier this week was nothing short of amazing, as Gemini appeared to fluidly interact with a questioner going through various tasks and drawings, always giving succinct and correct answers.

Yet in reaction to the announcement Google’s stock only got a bump of a couple of percentage points, a minimal response to supposedly being one step closer to artificial general intelligence (AGI), the holy grail of AI. Perhaps because, while the video makes it seem like the AI is watching the person’s actions (like the viewer is) and reacting in real-time, that’s. . . not what’s going on. Rather, they pre-recorded it and sent individual frames of the video to Gemini to respond to, along with more informative prompts than shown, and then edited the replies from Gemini to be shorter and thus, presumably, more relevant. Factor all that in, and Gemini doesn’t look that different from GPT-4, scoring only slightly better on batteries of tests, and GPT-4 gives much the same answers to photos taken from the video. It was the stitching together of an illusion.

A synecdoche of the industry’s current state as a whole. It was only one month ago that OpenAI held a DevDay launch, unveiling with pomp a novel “GPT store” of apps. The presentation projected the image of an ascendant Sam Altman acting as the heir to Steve Jobs. In retrospect, the presentation was a high-water mark, or at least, some sort of local peak, for the company and the AI industry as a whole. Just several weeks later, the news that the board fired Sam set the internet ablaze, and led to increasingly speculative reporting on backroom maneuvers of the non-profit turned for-profit.

Continued hype is necessary for the industry, because so much money flowing in essentially allows the big players, like OpenAI, to operate free of economic worry and considerations. The money involved is staggering: Anthropic announced they would compete with OpenAI and raised 2 billion dollars to train their next-gen model, a European counterpart just raised 500 million, etc. Venture capitalists are eager to throw as much money as humanly possible into AI, as it looks so revolutionary, so manifesto-worthy, so lucrative.

Yet, who precisely is the waiting audience for the GPT Store? I haven’t seen it mentioned much, if at all, on social media. Even news stories about the GPT Store post-announcement are scarce, except a reveal it’s been delayed to 2024. I did, however, find a slideshow from Gizmodo of the kinds of things they expect will be in the GPT Store, based on current GPTs. Here’s the first one:

While I have no idea what the downloads are going to be for the GPT Store next year, my suspicion is it does not live up to the hyped Apple-esque expectation.

And listen. We all know that, barring further infighting and coups, neither OpenAI nor Anthropic, nor any of the other leading players, is in any real economic danger in the short term. That’s absolutely not what I’m saying. People in the industry are used to criticisms, which too often are some academic finger-wagging warning that AI will never work, that artificial general intelligence (like anything resembling a human’s) is impossible, or so on. Right now Waymo’s self-driving cars are outperforming humans, at least in the sense of getting into 76% fewer accidents. And given their test scores, I’m willing to say GPT-4 or Gemini is smarter along many dimensions than a lot of actual humans, at least in the breadth of their abstract knowledge, all while noting even leading models still have around a 3% hallucination rate, which stacks up in a complex task.
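To get a feel for how a roughly 3% rate "stacks up," here is a back-of-the-envelope sketch. It assumes, purely for illustration, that a task is a chain of steps and that each step goes wrong independently with the same probability. Real models are unlikely to behave this neatly, and the function name and step counts are hypothetical rather than drawn from any benchmark.

```python
def chance_of_at_least_one_error(per_step_rate: float, steps: int) -> float:
    """Probability that at least one of `steps` steps goes wrong.

    Assumption (illustrative only): every step fails independently
    with the same probability `per_step_rate`.
    """
    if not 0.0 <= per_step_rate <= 1.0:
        raise ValueError("per_step_rate must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    # The chance that every step succeeds is (1 - rate) ** steps;
    # the complement is the chance that something went wrong somewhere.
    return 1.0 - (1.0 - per_step_rate) ** steps


if __name__ == "__main__":
    # Step counts chosen arbitrarily to show the trend.
    for steps in (1, 10, 50):
        risk = chance_of_at_least_one_error(0.03, steps)
        print(f"{steps:>2} steps: {risk:.0%} chance of at least one error")
```

Under those assumptions, a ten-step task has about a one-in-four chance of containing an error somewhere, and a fifty-step task has closer to a three-in-four chance.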

A more interesting “bear case” for AI is that, if you look at the list of industries that leading AIs like GPT-4 are capable of disrupting—and therefore making money off of—the list is lackluster from a return-on-investment perspective, because the industries themselves are not very lucrative. What are AIs of the GPT-4 generation best at? It’s things like:

writing essays or short fictions

digital art

chatting

programming assistance

The proposed GPT Store is a lot of versions of this, and these are also the use cases that high-profile investors are explicitly bullish about. Here’s from Andreessen Horowitz’s “The Economic Case for Generative AI:”

As a motivating example, let’s look at the simple task of creating an image. Currently, the image qualities produced by these models are on par with those produced by human artists and graphic designers, and we’re approaching photorealism. As of this writing, the compute cost to create an image using a large image model is roughly $.001 and it takes around 1 second. Doing a similar task with a designer or a photographer would cost hundreds of dollars (minimum) and many hours or days (accounting for work time, as well as schedules). Even if, for simplicity’s sake, we underestimate the cost to be $100 and the time to be 1 hour, generative AI is 100,000 times cheaper and 3,600 times faster than the human alternative.
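The arithmetic in that passage does check out, for what it's worth. Here is a quick sketch that uses only the figures given in the quote ($100 and 1 hour for the human, $.001 and 1 second for the model). The function and variable names are mine, for illustration.

```python
def advantage_ratios(human_cost_usd: float, human_seconds: float,
                     ai_cost_usd: float, ai_seconds: float) -> tuple[float, float]:
    """Return (how many times cheaper, how many times faster) the AI is."""
    cost_ratio = human_cost_usd / ai_cost_usd
    speed_ratio = human_seconds / ai_seconds
    return cost_ratio, speed_ratio


if __name__ == "__main__":
    # Figures from the quoted passage: $100 and one hour for a designer,
    # $0.001 and one second for an image model.
    cheaper, faster = advantage_ratios(100.0, 60 * 60, 0.001, 1)
    print(f"{cheaper:,.0f}x cheaper, {faster:,.0f}x faster")
```

Note what the ratios leave out: they say nothing about how many images anyone actually wants to buy.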

Fine. Maybe all true (although using GPT-4 to do something more significant can cost serious money very quickly, even just for simple tasks). The issue is that taking the job of a human illustrator just. . . doesn’t make you much money. Because human illustrators don’t make much money! While you can easily use Dall-E to make art for a blog, or a comic book, or a fantasy portrait to play an RPG, the market for those things is vanishingly small, almost nonexistent. Look at this percent drop in copywriting and editing that followed ChatGPT’s release:

It’s sad for the freelancers, obviously, but what exactly is OpenAI gaining from the -10% market “disruption” of freelancer salaries? Not much in the grand scheme of an 86 billion dollar company. Yet Horowitz’s reasoning continues along these lines, imagining replacing other industries as well:

A similar analysis can be applied to many other tasks. For example, the costs for an LLM [Large Language Models like GPT-4] to summarize and answer questions on a complex legal brief is fractions of a penny, while a lawyer would typically charge hundreds (and up to thousands) of dollars per hour and would take hours or days. The cost of an LLM therapist would also be pennies per session. And so on.

Again, even assuming that’s all technologically possible, these examples, law and mental health, are extremely difficult to disrupt for structural reasons—not just because the vast majority of people want a final human overseer, but because a whole host of regulations, traditions, and legalities stand in the way. In the next decades, lawyers might increase their use of AI, but AI is absolutely not going to replace lawyers entirely, nor will it significantly siphon off their collective salaries. Again, this isn’t an argument about capabilities! Maybe GPT-7 will be the best lawyer on the planet on a technical level of creating really strong briefs. It’s instead an argument about licensing, trust, and established systems that AI somehow needs to fit into.

Human-preferenced bottlenecks exist even in the more creative fields that contemporary AIs excel at, industries Horowitz implies are going to be very lucrative for AI companies:

Generative AI models are incredibly general and already are being applied to a broad variety of large markets. This includes images, videos, music, games, and chat. The games and movie industries alone are worth more than $300 billion.

Could AI hoover up a substantial portion of that $300 billion? Well, Hollywood just outlawed the use of AI writing due to the writers’ strike. Actors aren’t going anywhere. Directors aren’t going anywhere. CGI might use AI techniques, but I have a hard time understanding where the massive value extraction is going to come from here. How precisely will AI capture a portion of the $300 billion movie and game market?

Perhaps investors could bet on some sort of insurgent, anonymous creators using AI to create literally better movies than Hollywood and, not only that, ensure their new product’s distribution in ways that make a lot of money, somehow displacing the Hollywood distribution apparatus of big stars, streaming deals, expensive media blitzes, and global theater releases. . . but that’s a wild bet.

While I personally wouldn’t go so far as to describe current LLMs as “a solution in search of a problem,” as cryptocurrency has famously been described, I do think the description rings true in an overall economic/business sense so far. Was there really a great crying need for new ways to cheat on academic essays? Probably not. Will chatting with the History Buff AI app (it was in the background of Sam Altman’s presentation) be significantly different than chatting with posters on /r/history on Reddit? Probably not. A big portion of users pay the $20 to OpenAI for GPT-4 access more for the novelty than anything else.

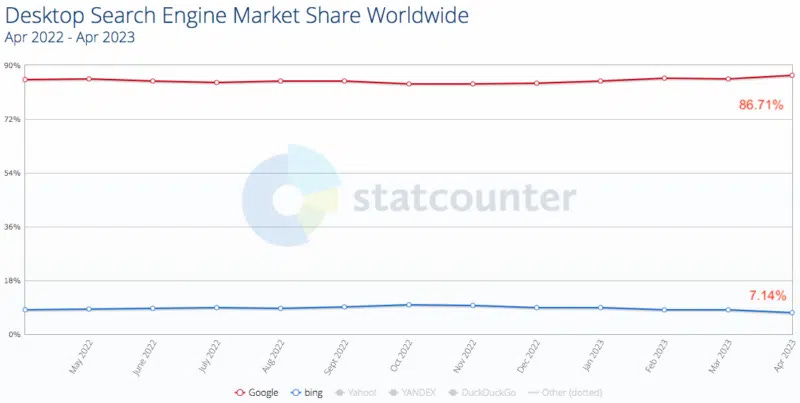

Search is the most obvious large market for AI companies, but Bing has had effectively GPT-4-level AI on offer now for almost a year, and there’s been no huge steal from Google’s market share.

What about programming? It’s actually a great expression of the issue, because AI isn’t replacing programming—it’s replacing Stack Overflow, a programming advice website (after all, you can’t just hire GPT-4 to code something for you, you have to hire a programmer who uses GPT-4, since the mere process of describing what you want is complex and so on). Even if OpenAI drove Stack Overflow out of business entirely and cornered the market on “helping with programming” they would gain, what? Stack Overflow is worth about 1.8 billion, according to its last sale in 2022. OpenAI already dwarfs it in valuation by an order of magnitude.

The more one thinks about this, the more one notices a tension in the very pitch itself: don’t worry, AI isn’t going to take all our jobs, just make us better at them, but at the same time, the upside of AI as an industry is the total combined worth of the industries it’s replacing, er, disrupting, and this justifies the massive investments and endless economic optimism. It makes me worried about the worst of all possible worlds: generative AI manages to pollute the internet with cheap synthetic data, manages to make being a human artist / creator harder, and manages to provide the basis of agential AIs that still pose some sort of existential risk if they get intelligent enough, all without ushering in some massive GDP boost that takes us into utopia (Goldman Sachs apparently expects AI to start noticeably impacting GDP only by 2027).

Now, obviously proponents of investment in these companies will say all this is only the beginning. What about AI personal assistants? Robot butlers? All those things! Even assuming all that comes true sometime over the next decades: what is the market for personal assistants? What’s the market for butlers? Most people have neither of those things. People talk about “overhangs” in AI a lot, but not enough talk about how Silicon Valley in its excitement is creating an overhang wherein what these AIs can do vastly outstrips any deployed economic use we have for them. Additionally, much like cryptocurrency, investments in AI are susceptible to potential economic “black swans” waiting to happen, particularly around copyright (like if AI companies become forced to pay for the right to use copyrighted material to train their models, which Andreessen Horowitz is indeed worried about).

If the AI industry ever goes through an economic bust sometime in the next decade I think it’ll be because there are fewer ways than first thought to squeeze substantial profits out of tasks that are relatively commonplace already. E.g., in one sense, you might say that the ability to answer medical questions about symptoms is worth a huge amount of money: just look how much it costs to educate, or just visit, a doctor! But in another sense, you might say that the ability to answer medical questions is worth almost nothing, since an educated person can achieve similar sleuthing about symptoms using Google or Reddit, and usually diagnosis is either (a) not the problem, while treatment is, or (b) diagnosis requires further testing to differentiate hypotheses, which the AI can’t do. We can just look around for equivalencies. The payment for humans working as “mechanical turks” on Amazon is shockingly low. If a human pretending to be an AI (which is essentially what a mechanical turk worker is doing) only makes a buck an hour, how much will an AI make doing the same thing?

Is this just a historical quirk of LLMs like GPT-4 (and related spin-offs) being the first kind of “foundation model” that gets made, and therefore being good writers / chatters / artists / question-answerers and not much else? In other words, is it just a quirk of the current state of technology, or something more general?

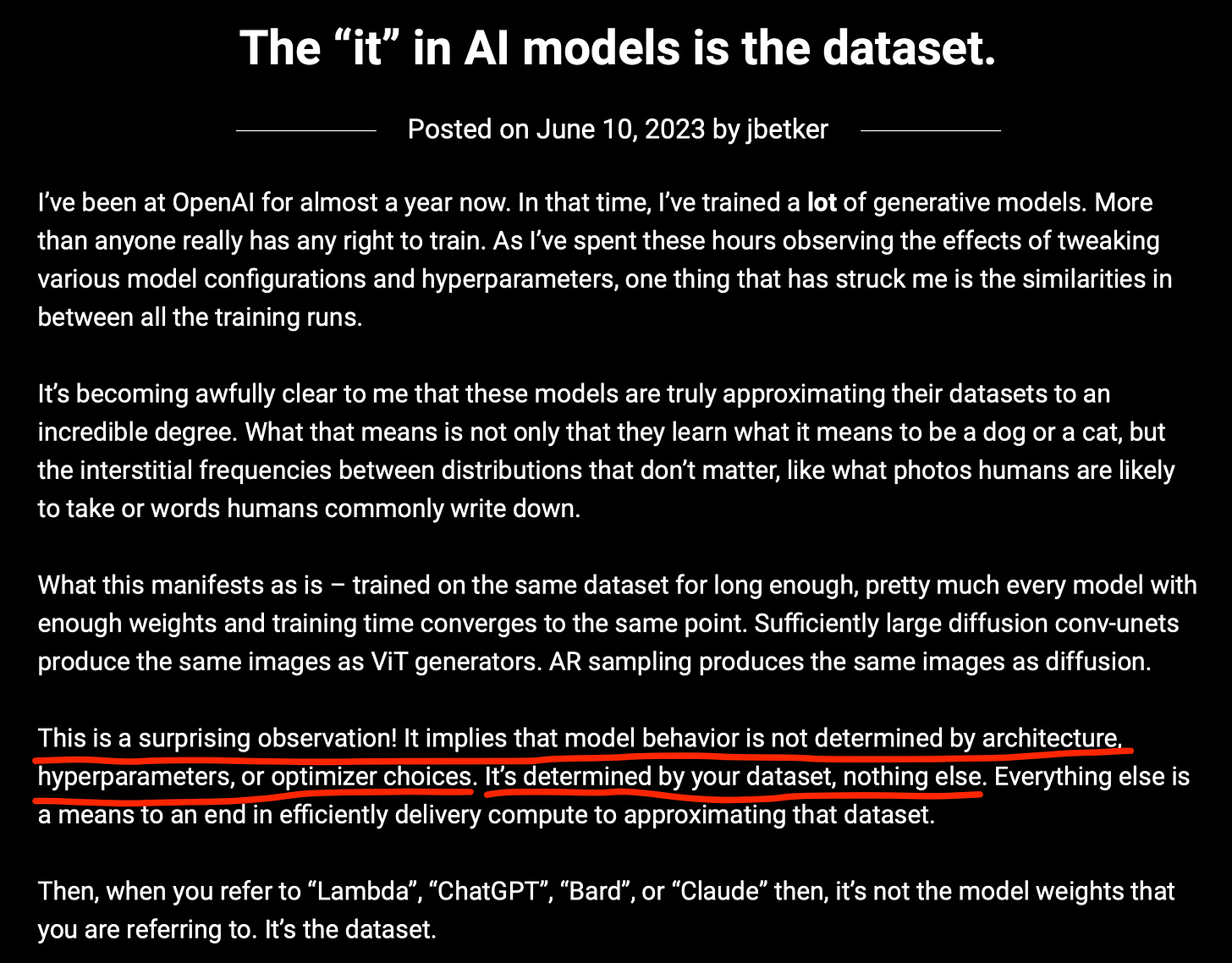

I think that this paradox of impressive intelligence being associated with less impressive money-making ability might be an unavoidable theme for AI, at least for now, simply because the success of contemporary AIs is based almost entirely on the massive size and quality of the data sets used to train them. Here’s from an employee at OpenAI, the kind of engineer who actually builds these systems, expressing that the success of a model is entirely inherent to the available data set:

What’s written on the internet is a huge “high quality” training set (at least in that it is all legible and collectable and easy to parse) so AIs are very good at writing the kind of things you read on the internet. But data with a high supply usually means its production is easy or commonplace, which, ceteris paribus, means it’s cheap to sell in turn. The result is a highly-intelligent AI merely adding to an already-massive supply of the stuff it’s trained on. Like, wow, an AI that can write a Reddit comment! Well, there are millions of Reddit comments, which is precisely why we now have AIs good at writing them. Wow, an AI that can generate music! Well, there are millions of songs, which is precisely why we now have AIs good at creating them.

Call it the supply paradox of AI: the easier it is to train an AI to do something, the less economically valuable that thing is. After all, the huge supply of the thing is how the AI got so good in the first place.

While I don’t think the supply paradox of AI is some sort of economic iron law (if there even is such a thing), it might hold truer than many of those investing in the space would like. Like all things that initially appear as an infinite gold mine, it may turn out not to be for complicated downstream reasons. So I urge investors throwing money at anything that moves: be wary! AI might end up incredibly smart, but mostly at things that aren’t economically valuable.

Like, you know, writing.


What I keep coming back to is that language is just a bridge. It's a bridge between your experience and my experience. I can understand what you are saying only because elements of my experience correspond to elements of your experience and language forms a bridge between them. This leads to two thoughts:

1. AI is trained on language, not experience. It is trained on bridges, not on solid ground. No wonder it hallucinates.

2. I don't want content, I want communication. Human existence is fundamentally lonely. We have access only to our own mind. Everyone else is a stranger to us. Except when we communicate. Knowing that there is a human being with human experience on the other side of the bridge is the only reason I am interested in the bridge at all. A bridge to nowhere, no matter how bright and shiny it may be, and no matter how swiftly it may be built, is still a bridge to nowhere, and I'm not interested.

Which leads to the further thought that the notion that AI can disrupt the content industry by building shinier bridges faster misses the point that we never wanted bridges in the first place. What we wanted was a way to cross to the other side. A bridge is of value only if there is something I want on the other shore. And the thing I want is a human being.