We Consciousness Researchers Have Failed You

In Which I Become Shackleton

So Michael Pollan’s latest book, A World Appears: A Journey into Consciousness, has been recommended to me by almost everyone at this point.

A breakout hit of a nonfiction book?

About my beloved topic, consciousness?

Oh god, I barely made it through.

Experienced sensations while reading: frustration, dread, restless legs, and overwhelming waves of weariness. At one point I felt physically nauseous.

I’ve been trying to figure out why, since (a) Michael Pollan is a great writer who has proven his chops over countless other topics, and (b) this is objectively quite a good book about the science of consciousness. Indeed, I should be happy! Consciousness is clearly having “a moment” right now—a science book about consciousness has been on The New York Times bestseller list for nine weeks, and meanwhile, the online world is abuzz with debates about AI consciousness.

And yet… I hated Pollan’s book.

I felt that every next chapter or section could have been predicted by some statistical machine for producing books about consciousness (“Okay, here’s the part about David Chalmers coming up”). And yes, I have the advantage of being a researcher in the same subject and have even worked with some of the figures Pollan writes about, which is why in my own The World Behind the World (we all seem to gravitate to the same titles, huh) I broadly told much the same story. But you can even go back to science journalist John Horgan’s The Undiscovered Mind, published in 1999, to get similar progress beats and quite familiar names. It’s been 27 years, during which the discussion has (as many fields of science do) centered around major figures like neuroscientists Christof Koch or Giulio Tononi or Antonio Damasio or philosophers like David Chalmers. There’s always the part where Alison Gopnik makes an appearance. Karl Friston pops his head in. And all these people are intellectual titans. Truly. But honestly, this stage of consciousness research feels played out.

Like you have Christof Koch, one of the highest-profile figures, who broke open the field in the 1990s with Francis Crick (co-discoverer of DNA’s structure) and gave one of the first proposals for a neural correlate of consciousness: gamma oscillations in the ~40Hz range in the cortex.

Koch, who is soon to turn seventy, was for a while after the death of Francis Crick a staunch supporter of Integrated Information Theory (I was part of the team that worked on developing that theory after Giulio Tononi proposed it, and even once did a conference submission with Koch himself). But now Koch has apparently moved on to other approaches to consciousness, mentioning his attendance at an ayahuasca ceremony and his accessing of a “universal mind.”

Here’s Pollan talking to Koch at the end of the book:

When I confessed to Koch my fear—that after my five-year journey into the nature and workings of consciousness, I somehow knew less than I did when I started—he simply smiled.

“But that’s good,” he said. “That’s progress.”

No, it isn’t!

Consciousness is not here for our personal therapy. It’s not tied to our life journeys. And I’m guilty of all that artsy and personal stuff too! But it’s no longer about how the grand mystery makes us feel, or the friends we made along the way.

It’s all changed.

HOW WE FAILED

Right now, there’s some college student falling in love with a chatbot instead of the young woman who sits next to him in class, all because science literally cannot tell him that the chatbot is lying about experiencing love. On the other hand, if AIs somehow are conscious right now (to some degree), or if near-future ones will become so, then they deserve rights and protections, and the entire legal and social apparatus of our civilization must expand rapidly to include radically different types of minds (or we must choose to restrict what kinds of minds we create). There are immediate practical matters here. Long term, we also need to protect against extremely bad futures where only non-conscious intelligences remain. The worst of all possible worlds is that our civilization acts like a reverse metamorphosis, where something weaker but more beautiful, organic consciousness, gets shed in the birth of some horrible star-devouring insect made of matrix multiplication. And then it turns out there is nothing it is like to be two matrices multiplying.

While it’s my opinion that modern LLMs operate more like tools right now, or at best like a lesser statistical approximation of what a good human output would be (with their main advantage being search, not insight), this is all just the beginning of the technology. The door is open and will never be closed again.

Of course, consciousness matters far beyond just AI. Table stakes for actual scientific progress on consciousness include shifting neuroscience and psychiatry from pre-paradigmatic to post-paradigmatic sciences (and all the pile-on effects from that). This was always true. But my point here is that LLMs act like a forcing function. Before everything changed, consciousness research was an unhurried subfield of neuroscience that was always a little weird and niche; therefore academics are guilty of treating consciousness like an academic exercise. A stance that was somewhat excusable even just five or six years ago. Sure, becoming a neuroscientist or cognitive scientist or philosopher like Koch who makes a living trying to figure out consciousness is not exactly easy. Securing a paying position is hard, but once there, the actual academic research on consciousness is a somewhat cushy gig. Other people’s lives aren’t immediately on the line in the way they are for cancer research, and the ideas are fun to discuss on podcasts, and no opinion can really be proven wrong. So I think many of us have been guilty of thinking, deep down, that nothing will ever fully get solved, and so working on consciousness basically means getting to talk about interesting stuff, promoting your pet theory of the moment at conferences, writing some papers that only a few other researchers really read, maybe stuffing some undergrads in a brain scanner and making some colorful figures, getting jealous about funding and petty about who is on what committee, and basically just career-maxxing the decades away. There’s been a lot of that.

But having a massive hole in humanity’s scientific worldview is Actually Bad and our ignorance has led to instability and uncertainty and now, actual danger, and consciousness researchers need to Get Off Their Collective Asses and actually solve this problem. So it’s pretty important to ask:

WHY HAS CONSCIOUSNESS RESEARCH MADE SO LITTLE PROGRESS? OR, WHY DO WE SUCK?

I think there’s a pretty common defeatist view that goes something like this:

Consciousness is an impossible problem that no one has any idea about, in fact, people even struggle to define the word, and it’s a problem humanity has worked on for 2,000 years without any real resolution.

Almost every word of that is wrong.

Yes, scientific confusion about the ultimate nature of consciousness abounds! But there’s very little definitional confusion about consciousness, and that’s a big hurdle that’s been cleared. I’ve written about this before:

In the actual academic field, we are all talking about the same internal Jamesian stream with mostly the same phenomenology and structure, the same bound-together flow of sensory experiences and thoughts, i.e., the feeling of being you that begins at the start of the day when you wake from a dreamless sleep. There’s a lot of pointing at the same thing. Just practically, if a pre-scientific definition of “consciousness” were so impossible, it’d be pretty weird to have such a clearly defined “consciousness genre” of academic papers, conferences, and pop-sci books. If definitions undermined the whole thing, you’d expect Pollan’s book and Horgan’s book and my book to all be wildly varying—but if you read them, it’s pretty clear they’re not. I don’t find any remaining uncertainty beyond that surprising. Why would a pre-paradigmatic science start with perfect definitions? It’s just a run-around, asking for crystalline definitions of “electricity” prior to Maxwell, or “disease” prior to germ theory.

Another thing that’s wrong with the defeatist idea is the claim that humanity has worked on consciousness for 2,000 years. In fact, modern science has spent surprisingly little time trying to crack consciousness at all, thanks to a time I dubbed the “consciousness winter” of the 20th century.

Due to the rise of behaviorism and logical positivism, “consciousness” became a dirty word in science for half a century or more—precisely when the rest of the sciences rocketed ahead! The consciousness winter only really ended in the 1990s because of the collective weight of several Nobel Prize winners (like Francis Crick and Gerald Edelman) determined to make it acceptable again.

The two major scientific conferences (which are how scientists organize) devoted to consciousness also only started in the mid-90s. That’s just 30 years ago! Modern science is incredibly powerful, maybe the most powerful force in existence, but in the grand scheme of things, 30 years is not long at all. That’s just one generation of scientists and thinkers. Kudos to them. Pretty much all of the big names (including definitely Koch) deserve their laurels, and contra Pollan, I do think consciousness research actually has made progress over the last 30 years, in that our conceptions are a lot cleaner, the definitional problem is pretty much solved, a lot of the space of initial possible theories is mapped, the problems and difficulties are much better known and clearly outlined, and there is organizational and behind-the-scenes structure that exists in the form of established conferences and labs and minor amounts of funding, etc.

And that’s another thing: no one has tried throwing money at the consciousness problem, at all—and for many problems, from AI to cancer cures, a necessary component often ends up being finance and scale and concentrating talent.

Humanity spends something like a billion dollars a year on CERN. To compare, let’s look at the biggest scientific funder in the United States, the NIH. Out of 103,280 grants awarded to scientists during the 2007-2017 decade, want to guess how many were about directly studying the contents of consciousness?[1]

Five.

That’s probably, at most, a couple million dollars in funding over a decade. Total. So if you’re a consciousness researcher, what can you do, cheaply? What can you do, for free? You can pontificate. You can propose your own theory of consciousness! That requires no funding whatsoever. And so for 30 years the meta in consciousness research has been to create your own theory of consciousness. We’ve let a thousand flowers bloom. The problem is that, if any flower is at all true or promising, you can’t identify it, as its sweet subjectivity-solving scent is completely masked by the bunches of corpse flowers around it. We have too many flowers, and one more just isn’t meaningful anymore. As is sometimes said at the end of fairy tales: “Snip, snap, snout. This tale’s told out.”

What we need are efforts at field-clearing, and methods that can actually make progress on consciousness in ways not tied to just promoting or trying to find evidence for some pre-chosen pet theory. That means finding ways to select over theories and to test theories en masse, so you don’t reinvent the wheel each time. And, perhaps most importantly, it means doing all this while scaling institutions with funding, specifically to get a bunch of smart people in a room working together on this.

ME GETTING OFF MY ASS

If the 2020s were all about intelligence, then necessarily the 2030s will be all about consciousness. Intelligence is about function, while consciousness is about being, and forays and progress into understanding (and shaping) function will in turn force our attention toward a better understanding of being. And if the answer to “Why has consciousness not been solved?” is secretly “Material and historical conditions made it hard for anyone to actually try!” then the answer is to actually try.

I refuse to live in a civilization where we consciousness researchers have so obviously failed. I refuse to live in a civilization where we cannot tell consciousness from non-consciousness. Where we can offer no guidance for the future. Where we cannot explain the difference between actually experiencing things vs just processing them. In the short term, this is destabilizing and harmful. In the long term, it may be literally existentially dangerous.

I mentioned this effort briefly a while back, but if you want a longer update as to what I’m personally doing about this issue, there’s a recent Popular Mechanics article on my new research institute Bicameral Labs, designed to make an attempt at solving consciousness.

There’s a lot more to Bicameral Labs than this (here are some abridged excerpts from Popular Mechanics in a footnote[2]) but I thought my own current personal position was worth sharing, since the dire state of consciousness research is becoming widely known: it is very possible a lot of the problems are just material ones of effort and funding and organization.

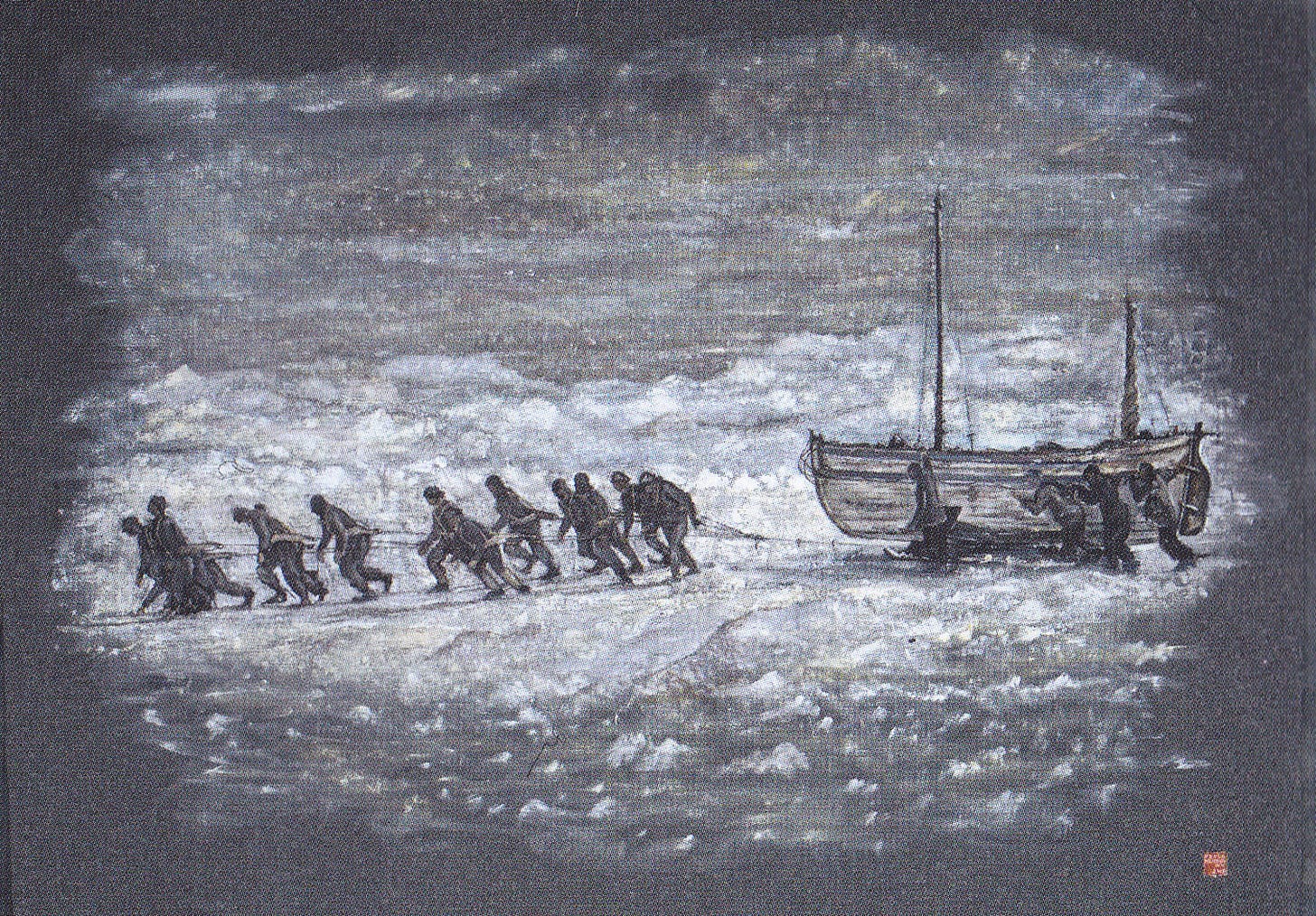

That is, if you zoom out, all the failures around consciousness, and the defeatist attitudes in response (mysterianism, illusionism, definitional deflation) can be viewed as a kind of learned helplessness after a few short decades of modern scientific research with zero serious funding. In the recent surprisingly bellicose words of Nvidia CEO Jensen Huang, these kinds of beliefs are “loser attitudes” and “I didn’t wake up a loser.” In fact, lately, when I do wake in bed each morning I find I have not rested, but feel I have run in the night and still have the blood of small animals in my mouth. One changes within to become the person needed at that time, at that moment, whether one wants it or not. Older spirits of adventure stir in my veins. Suddenly, I have become Shackleton, in desperate need of foresighted financiers and able-bodied men and women, young and ambitious, for a hard journey into icy lands unknown.

[1] There are also 12 grants by the NIH during the 2007-2017 period about “states of consciousness” vs. the 5 for “contents of consciousness,” but from what I can tell, states of consciousness includes more clinically focused research on subjects like anesthesia, so they were likely not as directly relevant.

[2] The Popular Mechanics article is behind a paywall, and I don’t want to leak the whole thing, but here’s an abridged relevant excerpt, since it’s quite a good description:

The first step is to stop treating those theories as sacred ideas and start treating them like claims that must survive crash tests. Hoel’s main tool is the “substitution argument.” His background in Integrated Information Theory (IIT)—a model developed by Tononi that links consciousness to how information is integrated in a system—helped shape his focus on how to test such theories.

Imagine one system that sees the color green and says “green.” Now build a second system that behaves exactly the same—same inputs, same outputs, and comes up with the same answer to the color—but uses a very different internal architecture. If a theory says the first system is conscious but the second is not, Hoel asks a pointed question: why? Both systems did the same job, so a theory needs a clear scientific reason for saying one is not conscious. If it can’t provide that reason, Hoel thinks the theory starts to crack.

Any theory that cannot make predictions, survive testing, or risk failure gets cut…. He calls it “logical judo”: build mathematically precise substitutes, expose contradictions, then eliminate weak contenders. The goal is not to crown a winner overnight. Hoel wants fewer flowers—and stronger survivors.

“Do you know how hard it is to say that something is not conscious?”

Before labs, algorithms, and philosophical combat, there was a small independent bookstore that his mother ran, and Hoel worked there as a child. Surrounded by shelves, stories, and long afternoons of reading, he first wanted to become a writer. Then, in college, he realized he had an aptitude for science, which eventually led him to consciousness research, including work on how consciousness may shift across different levels of the brain. During graduate school, he wrote a murder mystery built around the science of consciousness. In it, a character wonders whether you could solve the puzzle not by staring directly at awareness, but by tracing its outline.

Hoel compares this image to drawing in negative space—sketching everything around an object until the object finally appears. “Couldn’t you just draw the negative space?” he recalls thinking. Today, that old intuition sits at the heart of Bicameral. Instead of claiming to know exactly what consciousness is, he believes science may now have the tools to hunt it indirectly, using logic, falsifiability, and systematic elimination—until the surviving shape reveals itself.
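To make the substitution argument from the excerpt a bit more concrete, here is a minimal Python sketch. It is a toy illustration only, not the actual method used at Bicameral Labs. Every name in it (`System`, `table_system`, `rule_system`, `internals_theory`, `behavior_only_theory`, `substitution_check`) is hypothetical, the wavelength band used for "green" is a rough assumption, and a "theory" is reduced to a function that returns a yes/no verdict for a system.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

# Rough visible range in nanometers; the 495-570 nm "green" band is an assumption.
VISIBLE_NM = range(380, 751)


def is_green(wavelength_nm: int) -> bool:
    return 495 <= wavelength_nm <= 570


@dataclass(frozen=True)
class System:
    name: str
    architecture: str               # hypothetical label for the internals
    respond: Callable[[int], str]   # input wavelength -> spoken answer


# System A answers from a precomputed lookup table.
_TABLE = {nm: ("green" if is_green(nm) else "not green") for nm in VISIBLE_NM}
table_system = System("A", "lookup-table", lambda nm: _TABLE[nm])

# System B computes the same answer with a rule at call time.
rule_system = System("B", "rule", lambda nm: "green" if is_green(nm) else "not green")


def behaviorally_equivalent(a: System, b: System, inputs: Iterable[int]) -> bool:
    """True if both systems give the same output on every tested input."""
    return all(a.respond(x) == b.respond(x) for x in inputs)


# Two toy "theories": each maps a system to a verdict (conscious or not).
def internals_theory(system: System) -> bool:
    return system.architecture == "rule"


def behavior_only_theory(system: System) -> bool:
    return True


def substitution_check(theory: Callable[[System], bool],
                       a: System, b: System, inputs: Iterable[int]) -> str:
    """Flag a theory that splits its verdict across behavioral substitutes."""
    if not behaviorally_equivalent(a, b, list(inputs)):
        return "not substitutes"
    if theory(a) != theory(b):
        return "verdicts differ: theory owes a justification"
    return "consistent"
```

Running `substitution_check(internals_theory, table_system, rule_system, VISIBLE_NM)` flags the theory, because it gives different verdicts for two systems that do the same job. That is not a refutation by itself. It only marks where the theory has to supply a clear scientific reason for the difference, which is the "pointed question" in the excerpt.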
